What does accuracy refer to when discussing data measurement?

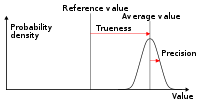

Accuracy and precision are two measures of observational error. Accuracy is how close a given set of measurements (observations or readings) is to the true value, while precision is how close the measurements are to each other.

In other words, precision is a description of random errors, a measure of statistical variability. Accuracy has two definitions:

- More commonly, it is a description of only systematic errors, a measure of statistical bias of a given measure of central tendency; low accuracy causes a difference between a result and a true value. ISO calls this trueness.

- Alternatively, ISO defines[1] accuracy as describing a combination of both types of observational error (random and systematic), so high accuracy requires both high precision and high trueness.

In the first, more common definition of "accuracy" above, the concept is independent of "precision", so a particular set of data can be said to be accurate, precise, both, or neither.

In simpler terms, given a statistical sample or set of data points from repeated measurements of the same quantity, the sample or set can be said to be accurate if its average is close to the true value of the quantity being measured, while the set can be said to be precise if its standard deviation is relatively small.
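A minimal Python sketch of this rule of thumb, using only the standard library and the first (bias-only) definition of accuracy. The helper name `describe`, the true value of 10.0, the four data sets, and the tolerances `bias_tol` and `spread_tol` are all illustrative assumptions; the text does not fix any numeric threshold for "close" or "relatively small".

```python
from statistics import mean, stdev

def describe(samples, true_value, bias_tol, spread_tol):
    """Classify repeated measurements of one quantity (hypothetical helper).

    Accuracy: is the average within bias_tol of the true value?
    Precision: is the sample standard deviation at most spread_tol?
    """
    bias = mean(samples) - true_value   # systematic offset of the average
    spread = stdev(samples)             # sample standard deviation
    accurate = abs(bias) <= bias_tol
    precise = spread <= spread_tol
    return bias, spread, accurate, precise

# Four illustrative data sets, each measuring a true value of 10.0
true_value = 10.0
data_sets = {
    "accurate and precise": [9.98, 10.01, 10.02, 9.99],
    "precise, not accurate": [10.51, 10.49, 10.50, 10.52],
    "accurate, not precise": [9.2, 10.9, 9.6, 10.3],
    "neither": [10.2, 11.8, 10.6, 11.4],
}
for label, samples in data_sets.items():
    bias, spread, accurate, precise = describe(samples, true_value, 0.1, 0.1)
    print(f"{label:22s} bias={bias:+.3f} sd={spread:.3f} "
          f"accurate={accurate} precise={precise}")
```

The two flags are computed independently, which mirrors the point that the two properties are independent under the first definition.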

Common technical definition

Accuracy is the proximity of measurement results to the true value; precision is the degree to which repeated (or reproducible) measurements under unchanged conditions show the same results.

In the fields of science and engineering, the accuracy of a measurement system is the degree of closeness of measurements of a quantity to that quantity's true value.[2] The precision of a measurement system, related to reproducibility and repeatability, is the degree to which repeated measurements under unchanged conditions show the same results.[2] [3] Although the two words precision and accuracy can be synonymous in colloquial use, they are deliberately contrasted in the context of the scientific method.

The field of statistics, where the interpretation of measurements plays a central part, prefers to use the terms bias and variability instead of accuracy and precision: bias is the amount of inaccuracy and variability is the amount of imprecision.

A measurement system can be accurate but not precise, precise but not accurate, neither, or both. For example, if an experiment contains a systematic error, then increasing the sample size generally increases precision but does not improve accuracy. The result would be a consistent yet inaccurate string of results from the flawed experiment. Eliminating the systematic error improves accuracy but does not change precision.
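The effect of sample size on a flawed experiment can be simulated in a few lines. This is a sketch under stated assumptions: readings are modelled as the true value plus a fixed systematic `OFFSET` plus Gaussian noise with standard deviation `NOISE_SD`; the constants and the helper `run` are hypothetical. As the sample grows, the standard error of the mean shrinks, but the mean stays about `OFFSET` away from the truth until the offset is removed.

```python
import math
import random
from statistics import mean, stdev

TRUE_VALUE = 20.0
OFFSET = 0.5    # assumed systematic error (e.g. a miscalibrated zero point)
NOISE_SD = 1.0  # assumed random error of a single reading

def run(n, offset, rng):
    """Simulate n readings; return (error of the mean, standard error)."""
    readings = [TRUE_VALUE + offset + rng.gauss(0.0, NOISE_SD) for _ in range(n)]
    avg = mean(readings)
    std_err = stdev(readings) / math.sqrt(n)  # spread expected of the average
    return avg - TRUE_VALUE, std_err

rng = random.Random(42)  # fixed seed so the run is repeatable
results = {}
for n in (10, 100, 1_000, 10_000):
    bias, se = run(n, OFFSET, rng)
    results[n] = (bias, se)
    print(f"n={n:>6}  error of mean={bias:+.3f}  standard error={se:.3f}")

# Removing the systematic error moves the average onto the true value,
# while the random spread of individual readings is unchanged.
bias_fixed, se_fixed = run(10_000, 0.0, rng)
print(f"offset removed: error of mean={bias_fixed:+.3f}  standard error={se_fixed:.3f}")
```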

A measurement system is considered valid if it is both accurate and precise. Related terms include bias (non-random or directed effects caused by a factor or factors unrelated to the independent variable) and error (random variability).

The terminology is also practical to indirect measurements—that is, values obtained by a computational procedure from observed data.

In addition to accuracy and precision, measurements may also have a measurement resolution, which is the smallest change in the underlying physical quantity that produces a response in the measurement.

In numerical analysis, accuracy is also the nearness of a calculation to the true value, while precision is the resolution of the representation, typically defined by the number of decimal or binary digits.
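A small standard-library illustration of the numerical-analysis sense. The choice of 22/7 and of a float32 round trip through `struct` is ours, not the source's, and `math.pi` serves as the reference value. 22/7 is stored with the full resolution of a 64-bit double yet is accurate to only about three significant digits, while π rounded to 32-bit single precision is held with fewer binary digits but lies much closer to the reference.

```python
import math
import struct
import sys

# Accuracy: how close a computed value is to the true value.
# Precision: how finely the number format can represent values.
approx = 22 / 7   # a crude approximation of pi, stored as a 64-bit double
print(f"22/7             = {approx!r}")
print(f"error vs math.pi = {abs(approx - math.pi):.2e}")    # about 1.26e-03
print(f"double epsilon   = {sys.float_info.epsilon:.2e}")   # about 2.22e-16

# The same constant rounded to 32-bit single precision: fewer binary digits,
# but a far more accurate value than 22/7.
pi32 = struct.unpack("f", struct.pack("f", math.pi))[0]
print(f"pi as float32    = {pi32!r}")
print(f"error vs math.pi = {abs(pi32 - math.pi):.2e}")      # about 8.74e-08
```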

In military terms, accuracy refers primarily to the accuracy of fire (justesse de tir), the precision of fire expressed by the closeness of a grouping of shots at and around the centre of the target.[4]

Quantification

In industrial instrumentation, accuracy is the measurement tolerance, or transmission of the instrument, and defines the limits of the errors made when the instrument is used in normal operating conditions.[5]

Ideally a measurement device is both accurate and precise, with measurements all close to and tightly clustered around the true value. The accuracy and precision of a measurement process are usually established by repeatedly measuring some traceable reference standard. Such standards are defined in the International System of Units (abbreviated SI from French: Système international d'unités) and maintained by national standards organizations such as the National Institute of Standards and Technology in the United States.

This also applies when measurements are repeated and averaged. In that case, the term standard error is properly applied: the precision of the average is equal to the known standard deviation of the process divided by the square root of the number of measurements averaged. Further, the central limit theorem shows that the probability distribution of the averaged measurements will be closer to a normal distribution than that of individual measurements.
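The standard-error rule can be written directly and checked empirically. In this sketch the process standard deviation `sigma = 2.0`, the Gaussian noise model, and the helper `standard_error` are assumptions for illustration. Averaging 16 readings should give averages whose spread is close to 2.0 / √16 = 0.5.

```python
import math
import random
from statistics import mean, stdev

def standard_error(sigma, n):
    """Precision of an average of n readings, given the process standard deviation."""
    return sigma / math.sqrt(n)

sigma = 2.0  # assumed known standard deviation of a single reading
for n in (1, 4, 16, 100):
    print(f"n={n:>3}  standard error = {standard_error(sigma, n):.3f}")

# Empirical check: repeat "average 16 readings" many times and measure
# the spread of those averages.
rng = random.Random(0)
averages = [mean(rng.gauss(0.0, sigma) for _ in range(16)) for _ in range(5_000)]
print(f"observed spread of averages = {stdev(averages):.3f}")
```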

With regard to accuracy we can distinguish:

- the difference between the mean of the measurements and the reference value, the bias. Establishing and correcting for bias is necessary for calibration.

- the combined effect of that and precision.
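Both quantities can be estimated from repeated readings of a reference standard. The sketch below assumes a reference value of 100.0 and five illustrative readings. To express the combined effect it uses mean squared error, which is one common choice rather than something the text prescribes; it splits exactly into bias² plus the population variance. The last lines show the calibration step of subtracting the established bias.

```python
from statistics import mean, pvariance

reference = 100.0                               # assumed reference standard value
readings = [100.8, 101.1, 100.9, 101.2, 101.0]  # illustrative repeated readings

bias = mean(readings) - reference   # systematic part of the error
variance = pvariance(readings)      # random part (population variance)
mse = mean((x - reference) ** 2 for x in readings)  # combined effect

print(f"bias     = {bias:+.3f}")
print(f"variance = {variance:.4f}")
print(f"MSE      = {mse:.4f}  (bias^2 + variance = {bias**2 + variance:.4f})")

# Calibration: correct readings by subtracting the established bias.
corrected = [x - bias for x in readings]
print(f"mean after correction = {mean(corrected):.3f}")
```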

A common convention in science and engineering is to express accuracy and/or precision implicitly by means of significant figures. Where not explicitly stated, the margin of error is understood to be half the value of the last significant place. For instance, a recording of 843.6 m, or 843.0 m, or 800.0 m would imply a margin of 0.05 m (the last significant place is the tenths place), while a recording of 843 m would imply a margin of error of 0.5 m (the last significant digits are the units).

A reading of 8,000 m, with trailing zeros and no decimal point, is ambiguous; the trailing zeros may or may not be intended as significant figures. To avoid this ambiguity, the number could be represented in scientific notation: 8.0 × 10³ m indicates that the first zero is significant (hence a margin of 50 m), while 8.000 × 10³ m indicates that all three zeros are significant, giving a margin of 0.5 m. Similarly, one can use a multiple of the basic unit of measurement: 8.0 km is equivalent to 8.0 × 10³ m. It indicates a margin of 0.05 km (50 m). However, reliance on this convention can lead to false precision errors when accepting data from sources that do not obey it. For example, a source reporting a number like 153,753 with precision ±5,000 looks like it has precision ±0.5. Under the convention it would have been rounded to 150,000.
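The significant-figure convention can be mechanized with Python's `decimal` module, whose exponent records the place of the last written digit, including in scientific notation such as `8.0E3`. The helper `implied_margin` is hypothetical. It treats every written digit as significant, so a bare integer like `"8000"` or `"153753"` gets a margin of 0.5, which is exactly the ambiguity and false-precision risk the convention carries.

```python
from decimal import Decimal

def implied_margin(reading: str) -> Decimal:
    """Half the value of the last significant place of a decimal string.

    Hypothetical helper: every written digit is treated as significant,
    so trailing zeros in an integer such as "8000" are taken at face value.
    """
    exponent = Decimal(reading).as_tuple().exponent  # place of the last digit
    return Decimal("0.5").scaleb(exponent)           # 0.5 * 10**exponent

for reading in ["843.6", "843.0", "800.0", "843", "8.0E3", "8.000E3", "153753"]:
    print(f"{reading:>8}  ->  ±{implied_margin(reading):f}")
```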

Alternatively, in a scientific context, if it is desired to indicate the margin of error with more precision, one can use a notation such as 7.54398(23) × 10⁻¹⁰ m, meaning a range of between 7.54375 and 7.54421 × 10⁻¹⁰ m.
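Reading this parenthesized notation amounts to aligning the digits in parentheses with the last digit of the value. A sketch follows; the helper `parse_concise` and its regular expression are our assumptions, and the power-of-ten factor (× 10⁻¹⁰ m here) is left outside the parsed string.

```python
import re
from decimal import Decimal

def parse_concise(text: str):
    """Parse notation such as "7.54398(23)" (hypothetical helper).

    The digits in parentheses are an uncertainty in units of the last digit
    of the value, so 7.54398(23) means 7.54398 ± 0.00023.
    """
    match = re.fullmatch(r"([+-]?\d+(?:\.\d+)?)\((\d+)\)", text.strip())
    if match is None:
        raise ValueError(f"not in concise notation: {text!r}")
    value = Decimal(match.group(1))
    last_place = value.as_tuple().exponent   # -5 for 7.54398
    uncertainty = Decimal(match.group(2)).scaleb(last_place)
    return value, uncertainty

value, unc = parse_concise("7.54398(23)")
low, high = value - unc, value + unc
print(f"{value} ± {unc}  ->  range {low} to {high} (× 10^-10 m)")
```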

Precision includes:

- repeatability: the variation arising when all efforts are made to keep conditions constant by using the same instrument and operator, and repeating during a short time period; and

- reproducibility: the variation arising using the same measurement process among different instruments and operators, and over longer time periods.

In engineering, precision is often taken as three times the standard deviation of the measurements taken, representing the range within which 99.73% of measurements can be expected to fall (assuming normally distributed errors).[6] For example, an ergonomist measuring the human body can be confident that 99.73% of their extracted measurements fall within ±0.7 cm if using the GRYPHON processing system, or ±13 cm if using unprocessed data.[7]
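The 99.73% figure is the share of a normal distribution lying within ±3 standard deviations, which `statistics.NormalDist` can confirm. The readings in millimetres and the helper `three_sigma` below are illustrative assumptions.

```python
from statistics import NormalDist, stdev

def three_sigma(samples):
    """Engineering precision as ±3 sample standard deviations (hypothetical helper)."""
    return 3 * stdev(samples)

# Fraction of a normal distribution within ±3 standard deviations of its mean.
coverage = NormalDist().cdf(3) - NormalDist().cdf(-3)
print(f"coverage of ±3σ: {coverage:.4%}")   # 99.7300%

readings_mm = [25.02, 24.98, 25.01, 24.99, 25.00, 25.03, 24.97]  # illustrative
print(f"precision: ±{three_sigma(readings_mm):.3f} mm")
```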

ISO definition (ISO 5725)

According to ISO 5725-1, accuracy consists of trueness (proximity of measurement results to the true value) and precision (repeatability or reproducibility of the measurement).

A shift in the meaning of these terms appeared with the publication of the ISO 5725 series of standards in 1994, which is also reflected in the 2008 issue of the "BIPM International Vocabulary of Metrology" (VIM), items 2.13 and 2.14.[2]

According to ISO 5725-1,[1] the general term "accuracy" is used to describe the closeness of a measurement to the true value. When the term is applied to sets of measurements of the same measurand, it involves a component of random error and a component of systematic error. In this case trueness is the closeness of the mean of a set of measurement results to the actual (true) value, and precision is the closeness of agreement among a set of results.

ISO 5725-1 and VIM also avoid the use of the term "bias", previously specified in BS 5497-1,[8] because it has different connotations outside the fields of science and technology, as in medicine and law.

- Figure: Low accuracy due to low precision

- Figure: Low accuracy even with high precision

In binary classification

Accuracy is also used as a statistical measure of how well a binary classification test correctly identifies or excludes a condition. That is, the accuracy is the proportion of correct predictions (both true positives and true negatives) among the total number of cases examined.[9] As such, it compares estimates of pre- and post-test probability. To make the context clear by the semantics, it is often referred to as the "Rand accuracy" or "Rand index".[10] [11] [12] It is a parameter of the test. The formula for quantifying binary accuracy is:

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

where TP = true positive; FP = false positive; TN = true negative; FN = false negative.
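Binary accuracy, (TP + TN) / (TP + TN + FP + FN), can be computed by first tallying the four confusion-matrix cells from paired labels. The helper names `counts` and `binary_accuracy` and the eight example labels are hypothetical.

```python
def binary_accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Proportion of correct predictions: (TP + TN) / (TP + TN + FP + FN)."""
    total = tp + tn + fp + fn
    if total == 0:
        raise ValueError("no cases examined")
    return (tp + tn) / total

def counts(actual, predicted):
    """Tally confusion-matrix cells from paired boolean labels."""
    pairs = list(zip(actual, predicted))
    tp = sum(a and p for a, p in pairs)
    tn = sum(not a and not p for a, p in pairs)
    fp = sum(not a and p for a, p in pairs)
    fn = sum(a and not p for a, p in pairs)
    return tp, tn, fp, fn

actual    = [True, True, False, False, False, True, False, False]
predicted = [True, False, False, True, False, True, False, False]
tp, tn, fp, fn = counts(actual, predicted)
print(tp, tn, fp, fn, binary_accuracy(tp, tn, fp, fn))
```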

Note that, in this context, the concepts of trueness and precision as defined by ISO 5725-1 are not applicable. One reason is that there is not a single "true value" of a quantity, but rather two possible true values for every case, while accuracy is an average across all cases and therefore takes into account both values. However, the term precision is used in this context to mean a different metric originating from the field of information retrieval (see below).

In psychometrics and psychophysics

In psychometrics and psychophysics, the term accuracy is used interchangeably with validity and constant error. Precision is a synonym for reliability and variable error. The validity of a measurement instrument or psychological test is established through experiment or correlation with behavior. Reliability is established with a variety of statistical techniques, classically through an internal consistency test like Cronbach's alpha to ensure sets of related questions have related responses, and then comparison of those related questions between reference and target population.[citation needed]
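Cronbach's alpha compares the sum of the per-question variances with the variance of each respondent's total score: α = k / (k − 1) × (1 − Σ item variances / total-score variance), for k questions. The text does not give the formula; this sketch uses the standard textbook form, and the response table is invented. It uses sample variances throughout; population variances would give the same α because the scaling factor cancels in the ratio.

```python
from statistics import variance

def cronbach_alpha(scores):
    """Cronbach's alpha for a respondents-by-items score table (hypothetical helper)."""
    k = len(scores[0])  # number of items (questions)
    if k < 2:
        raise ValueError("need at least two items")
    items = list(zip(*scores))  # one tuple of scores per item
    item_var_sum = sum(variance(item) for item in items)
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - item_var_sum / total_var)

# Illustrative responses: 5 respondents answering 3 related questions (1 to 5 scale)
responses = [
    [4, 5, 4],
    [2, 2, 3],
    [3, 3, 3],
    [5, 4, 5],
    [1, 2, 1],
]
print(f"alpha = {cronbach_alpha(responses):.3f}")
```

Values near 1 indicate that the related questions draw consistent responses.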

In logic simulation

In logic simulation, a common mistake in evaluation of accurate models is to compare a logic simulation model to a transistor circuit simulation model. This is a comparison of differences in precision, not accuracy. Precision is measured with respect to detail and accuracy is measured with respect to reality.[13] [14]

In information systems

Information retrieval systems, such as databases and web search engines, are evaluated by many different metrics, some of which are derived from the confusion matrix, which divides results into true positives (documents correctly retrieved), true negatives (documents correctly not retrieved), false positives (documents incorrectly retrieved), and false negatives (documents incorrectly not retrieved). Commonly used metrics include the notions of precision and recall. In this context, precision is defined as the fraction of retrieved documents which are relevant to the query (true positives divided by true positives + false positives), using a set of ground truth relevant results selected by humans. Recall is defined as the fraction of relevant documents retrieved compared to the total number of relevant documents (true positives divided by true positives + false negatives). Less commonly, the metric of accuracy is used; it is defined as the total number of correct classifications (true positives plus true negatives) divided by the total number of documents.
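With sets of document IDs, the four confusion-matrix cells and the three metrics take a few lines to compute. The helper `retrieval_metrics`, the 10-document collection, and the relevance judgments are hypothetical. Unlike precision and recall, accuracy depends on the size of the whole collection through the true negatives.

```python
def retrieval_metrics(retrieved, relevant, all_documents):
    """Precision, recall and accuracy of a retrieved set (hypothetical helper)."""
    retrieved, relevant, all_documents = set(retrieved), set(relevant), set(all_documents)
    tp = len(retrieved & relevant)                  # correctly retrieved
    fp = len(retrieved - relevant)                  # incorrectly retrieved
    fn = len(relevant - retrieved)                  # incorrectly not retrieved
    tn = len(all_documents - retrieved - relevant)  # correctly not retrieved
    precision = tp / (tp + fp) if retrieved else 0.0
    recall = tp / (tp + fn) if relevant else 0.0
    accuracy = (tp + tn) / len(all_documents)
    return precision, recall, accuracy

docs = [f"d{i}" for i in range(1, 11)]  # a 10-document collection
relevant = {"d1", "d2", "d3", "d4"}     # human-judged ground truth
retrieved = {"d1", "d2", "d5"}          # what the system returned
print(retrieval_metrics(retrieved, relevant, docs))
```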

None of these metrics take into account the ranking of results. Ranking is very important for web search engines because readers seldom go past the first page of results, and there are too many documents on the web to manually classify all of them as to whether they should be included or excluded from a given search. Adding a cutoff at a particular number of results takes ranking into account to some degree. The measure precision at k, for example, is a measure of precision looking only at the top ten (k = 10) search results. More sophisticated metrics, such as discounted cumulative gain, take into account each individual ranking, and are more commonly used where this is important.
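Both ranking-aware measures can be sketched over a list of graded relevance scores given in ranked order. Assumptions: a result counts as relevant for precision at k when its grade is above zero; DCG uses the linear-gain form rel / log2(rank + 1) (another common variant uses 2^rel − 1 in the numerator); the grades are illustrative; and k = 5 is used only because the example ranking has six results.

```python
import math

def precision_at_k(ranked_relevance, k=10):
    """Fraction of the top-k results that are relevant (grade > 0)."""
    top = ranked_relevance[:k]
    return sum(1 for rel in top if rel > 0) / k

def dcg(ranked_relevance, k=None):
    """Discounted cumulative gain: each graded relevance is divided by
    log2(rank + 1), so results further down the list count less."""
    top = ranked_relevance if k is None else ranked_relevance[:k]
    return sum(rel / math.log2(rank + 1) for rank, rel in enumerate(top, start=1))

# Graded relevance (0 = irrelevant ... 3 = highly relevant) in ranked order
ranking = [3, 2, 3, 0, 1, 2]
print(f"precision@5 = {precision_at_k(ranking, k=5):.2f}, DCG = {dcg(ranking):.3f}")

# Moving the irrelevant result to first place leaves precision@5 unchanged
# but lowers DCG, because DCG is sensitive to position within the cutoff.
reordered = [0, 3, 2, 3, 1, 2]
print(f"precision@5 = {precision_at_k(reordered, k=5):.2f}, DCG = {dcg(reordered):.3f}")
```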

See also

- Bias-variance tradeoff in statistics and machine learning

- Accepted and experimental value

- Data quality

- Engineering tolerance

- Exactness (disambiguation)

- Experimental uncertainty analysis

- F-score

- Hypothesis tests for accuracy

- Information quality

- Measurement uncertainty

- Precision (statistics)

- Probability

- Random and systematic errors

- Sensitivity and specificity

- Significant figures

- Statistical significance

References

- [1] BS ISO 5725-1: "Accuracy (trueness and precision) of measurement methods and results – Part 1: General principles and definitions.", p. 1 (1994).

- [2] JCGM 200:2008 International vocabulary of metrology – Basic and general concepts and associated terms (VIM).

- [3] Taylor, John Robert (1999). An Introduction to Error Analysis: The Study of Uncertainties in Physical Measurements. University Science Books. pp. 128–129. ISBN 0-935702-75-X.

- [4] North Atlantic Treaty Organization, NATO Standardization Agency AAP-6: Glossary of terms and definitions, p. 43.

- [5] Creus, Antonio. Instrumentación Industrial. [citation needed]

- [6] Black, J. Temple (21 July 2020). DeGarmo's materials and processes in manufacturing. ISBN 978-1-119-72329-5. OCLC 1246529321.

- [7] Parker, Christopher J.; Gill, Simeon; Harwood, Adrian; Hayes, Steven G.; Ahmed, Maryam (2021-05-19). "A Method for Increasing 3D Body Scanning's Precision: Gryphon and Consecutive Scanning". Ergonomics. 65 (1): 39–59. doi:10.1080/00140139.2021.1931473. ISSN 0014-0139. PMID 34006206.

- [8] BS 5497-1: "Precision of test methods. Guide for the determination of repeatability and reproducibility for a standard test method." (1979).

- [9] Metz, C. E. (October 1978). "Basic principles of ROC analysis" (PDF). Semin Nucl Med. 8 (4): 283–98. doi:10.1016/s0001-2998(78)80014-2. PMID 112681.

- [10] "Archived copy" (PDF). Archived from the original (PDF) on 2015-03-11. Retrieved 2015-08-09.

- [11] Powers, David M. W. (2015). "What the F-measure doesn't measure". arXiv:1503.06410 [cs.IR].

- [12] Powers, David M. W. "The Problem with Kappa" (PDF). Anthology.aclweb.org. Retrieved 11 December 2017.

- [13] Acken, John M. (1997). "none". Encyclopedia of Computer Science and Technology. 36: 281–306.

- [14] Glasser, Mark; Mathews, Rob; Acken, John M. (June 1990). "1990 Workshop on Logic-Level Modelling for ASICS". SIGDA Newsletter. 20 (1).

External links

- BIPM - Guides in metrology, Guide to the Expression of Uncertainty in Measurement (GUM) and International Vocabulary of Metrology (VIM)

- "Beyond NIST Traceability: What really creates accuracy", Controlled Environments magazine

- Precision and Accuracy with Three Psychophysical Methods

- Appendix D.1: Terminology, Guidelines for Evaluating and Expressing the Uncertainty of NIST Measurement Results

- Accuracy and Precision

- Accuracy vs Precision: a brief video by Matt Parker

- What's the difference between accuracy and precision? by Matt Anticole at TED-Ed

- Precision and Accuracy exam study guide

Source: https://en.wikipedia.org/wiki/Accuracy_and_precision
